Introduction to Language-Image Pre-Training

Language-Image Pre-Training (LIP) has become a popular approach for obtaining robust visual and textual representations. It aligns the representations of paired images and texts, typically with a contrastive objective. The approach was popularized by large-scale models such as CLIP and ALIGN, which demonstrated its viability at massive scale [1]. Researchers continue to look for ways to make LIP more efficient and effective, particularly by reducing its reliance on very large batch sizes and substantial compute.

The Sigmoid Loss Innovation

Key Problem with Contrastive Learning

Traditional contrastive learning relies on a softmax-based loss function, which normalizes pairwise similarity scores across the entire batch of image-text pairs, once over images and once over texts. This is computationally expensive and memory-intensive, and because a naive softmax is numerically unstable, implementations need additional stabilization that adds further complexity [1].
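
As a point of reference, the softmax-based objective is commonly written as shown below. The notation is introduced here for illustration and is an assumption rather than something defined in this article: $x_i$ and $y_i$ denote the L2-normalized image and text embeddings of the $i$-th pair, $t$ is a temperature, and $\mathcal{B}$ is the mini-batch.

$$
\mathcal{L}_{\text{softmax}} = -\frac{1}{2|\mathcal{B}|} \sum_{i=1}^{|\mathcal{B}|} \left( \log \frac{e^{t\, x_i \cdot y_i}}{\sum_{j=1}^{|\mathcal{B}|} e^{t\, x_i \cdot y_j}} + \log \frac{e^{t\, x_i \cdot y_i}}{\sum_{j=1}^{|\mathcal{B}|} e^{t\, x_j \cdot y_i}} \right)
$$

Both denominators sum over every example in the batch, which is where the batch-wide dependency comes from.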

Introducing Sigmoid Loss

To address these challenges, researchers at Google DeepMind have proposed a simpler alternative: the pairwise Sigmoid loss for Language-Image Pre-Training, termed SigLIP. Unlike the softmax-based loss, the Sigmoid loss operates on each image-text pair independently, treating every pair as a binary classification problem (matching or not matching). It therefore needs no global normalization over the batch, which simplifies the computation significantly [1].
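
A minimal sketch of such a pairwise loss is given below, using only the Python standard library. It is an illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, and the temperature `t` and bias `b` defaults are placeholder values chosen for the example.

```python
import math


def log_sigmoid(x):
    # Numerically stable log(sigmoid(x)).
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def l2_normalize(v):
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]


def sigmoid_loss(img_emb, txt_emb, t=10.0, b=-10.0):
    """Pairwise sigmoid loss over a batch of image and text embeddings.

    Every (image i, text j) pair is scored independently as a binary
    problem: label +1 if i == j (a true pair), -1 otherwise.
    t and b are illustrative values (assumptions), not tuned settings.
    """
    imgs = [l2_normalize(v) for v in img_emb]
    txts = [l2_normalize(v) for v in txt_emb]
    total = 0.0
    for i, x in enumerate(imgs):
        for j, y in enumerate(txts):
            logit = t * sum(p * q for p, q in zip(x, y)) + b
            label = 1.0 if i == j else -1.0
            total += log_sigmoid(label * logit)
    # Average over the number of images in the batch.
    return -total / len(imgs)


# Toy usage: correctly paired embeddings should score a lower loss
# than the same embeddings with the texts swapped.
images = [[1.0, 0.0], [0.0, 1.0]]
texts_aligned = [[1.0, 0.0], [0.0, 1.0]]
texts_swapped = [[0.0, 1.0], [1.0, 0.0]]
print(sigmoid_loss(images, texts_aligned) < sigmoid_loss(images, texts_swapped))
```

Note that no term in the double loop depends on any other pair, so there is no batch-wide normalizer to compute.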

Benefits of Sigmoid Loss

- Memory Efficiency: The Sigmoid loss requires less memory than the softmax-based contrastive loss, which allows larger batch sizes to fit within the same hardware budget [1].

- Decoupling Batch Size: By not requiring operations across the full batch, the Sigmoid loss decouples the definition of the task from the batch size, allowing flexibility in training setups [1].
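
The two properties listed above can be illustrated with a small single-process sketch: because each pair contributes an independent term, the loss can be accumulated block by block without ever materializing the full similarity matrix, and the result does not depend on how the batch is split. The helper names, the chunking scheme, and the `t` and `b` values are assumptions made for this example, and the embeddings are assumed to be unit-normalized already.

```python
import math


def log_sigmoid(x):
    # Numerically stable log(sigmoid(x)).
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def block_loss_sum(img_chunk, txt_chunk, is_diagonal_block, t=10.0, b=-10.0):
    """Sum of pairwise loss terms for one image-chunk x text-chunk block."""
    total = 0.0
    for i, x in enumerate(img_chunk):
        for j, y in enumerate(txt_chunk):
            logit = t * sum(p * q for p, q in zip(x, y)) + b
            # Positives exist only where both chunks cover the same examples.
            label = 1.0 if (is_diagonal_block and i == j) else -1.0
            total -= log_sigmoid(label * logit)
    return total


def chunked_sigmoid_loss(imgs, txts, chunk_size, t=10.0, b=-10.0):
    """Accumulate the loss one block at a time.

    Only a chunk_size x chunk_size block of pairs is processed at once.
    """
    n = len(imgs)
    starts = range(0, n, chunk_size)
    total = 0.0
    for a in starts:
        img_chunk = imgs[a:a + chunk_size]
        for c in starts:
            txt_chunk = txts[c:c + chunk_size]
            total += block_loss_sum(img_chunk, txt_chunk, a == c, t, b)
    return total / n


# Toy usage: the loss is the same regardless of the chunk size.
imgs = [[1.0, 0.0], [0.0, 1.0], [0.6, 0.8], [0.8, 0.6]]
txts = [[0.8, 0.6], [0.6, 0.8], [0.0, 1.0], [1.0, 0.0]]
full = chunked_sigmoid_loss(imgs, txts, chunk_size=4)
print(all(abs(chunked_sigmoid_loss(imgs, txts, k) - full) < 1e-9 for k in (1, 2, 3)))
```

A softmax-based loss cannot be split this way as directly, since each term needs a normalizer computed over the whole batch.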

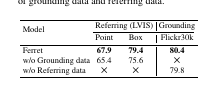

Experimental Validation

A series of experiments demonstrated that models trained with the Sigmoid loss outperform their softmax-loss counterparts, particularly at smaller batch sizes. For instance, when combined with Locked-image Tuning (the SigLiT setup), the approach reached 84.5% zero-shot accuracy on ImageNet after two days of training on only four TPUv4 chips [1].

Efficient Training With Locked-Image Tuning

SigLiT Model

The team also introduced the SigLiT model, which combines the Sigmoid loss with Locked-image Tuning (LiT), a setup in which a pre-trained image encoder is kept frozen and only the text encoder is trained. This combination proved highly efficient: a SigLiT model reached 79.7% zero-shot accuracy on ImageNet in just one day using four TPUv4 chips [1].

Impact on Batch Size and Training Duration

Through extensive testing, the researchers found that larger batch sizes do offer some performance benefits, but the gains diminish beyond a certain point. Surprisingly, a batch size of 32k was already close to optimal, balancing performance and resource efficiency [1].

Multilingual and Robust Pre-Training

mSigLIP: Multilingual Adaptation

Expanding the approach to multilingual data, the team pre-trained models on datasets covering over 100 languages. They discovered that a batch size of 32k was also sufficient for effective multilingual training, and going beyond this size didn’t yield significant improvements [1].

Robustness to Noise

Another notable advantage of the Sigmoid loss is its robustness to data noise. Models trained with Sigmoid loss demonstrated a higher tolerance to various types of corruption (e.g., random noise in images or texts), retaining performance superiority over softmax-trained models even under noisy conditions [1].

Conclusion

The introduction of the Sigmoid loss for Language-Image Pre-Training marks a significant advance in the efficiency and effectiveness of LIP models. By simplifying the loss computation and decoupling it from the batch size, SigLIP and SigLiT models offer compelling performance with reduced computational overhead. The approach makes better use of limited resources, adapts to multilingual data, and remains robust to noisy data, which makes language-image pre-training more accessible and practical for researchers and practitioners working on diverse applications [1].